Building a Private Family Assistant: From Monorepo to Cloud Run

Today was a high-velocity day for the Tolan Agents ecosystem. We’ve officially moved from a single-repo prototype to a robust, scalable, and secure polyglot monorepo architecture.

Senior Cloud Engineer - Google Cloud Platform, from Ireland

Every developer has a “Day the Music Died.” Mine was April 6, 2026.

This is what I built the app for. In Build 00059, I finally implemented the “Advanced Growth Analysis” engine.

Once the app was live, I had a problem: I had five years of height data for my oldest child sitting in a Notes app. Typing those in one-by-one was a non-starter.

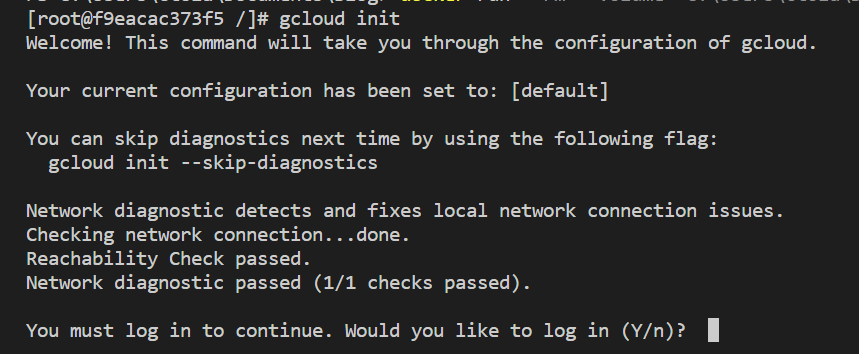

“It works on my machine” is a phrase that should haunt every developer. The real test of a project is when it lives on the public internet. For Growing-Together, I decided to go “all-in” on Google Cloud Platform (GCP).

Every developer has that one “itch” they need to scratch. For me, it was tracking my kids’ height. Sure, there are apps for that, but none of them felt right. They were either cluttered with ads or lacked the specific “sibling comparison” logic I wanted.

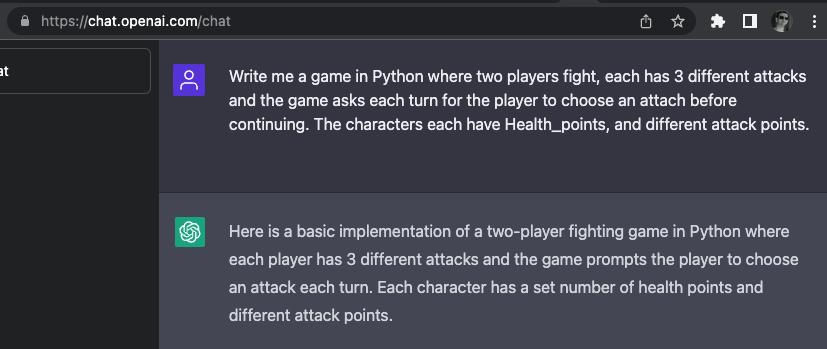

Draft - Hello, first post of 2023 and it’s a good one. I am creating a Python-based fighting game together with/for my son. With all the discussion of ChatGPT I decided to give it a go and see what it can do.

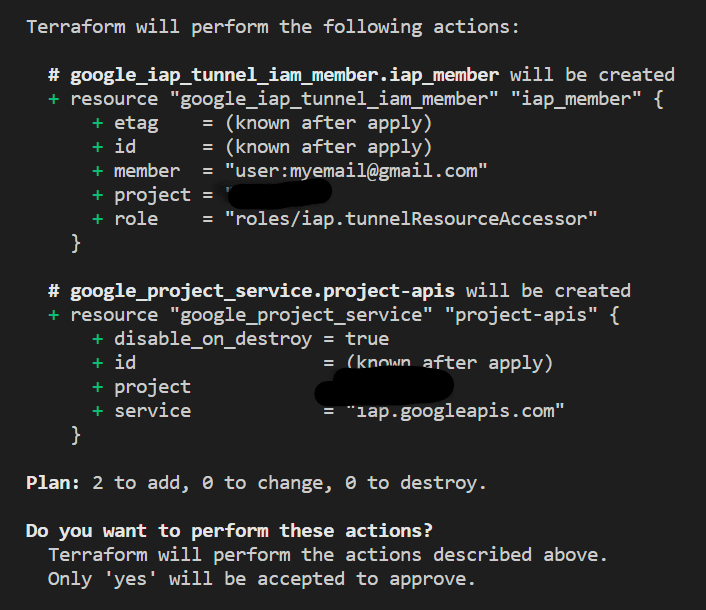

Hello, the most obvious thing to try next is to SSH to the VM, but I do not want these VMs to be openly reachable over the internet. So I will use Google’s Identity-Aware Proxy (IAP) to tunnel into the VMs without having to set up complicated VPN tunnels or open firewalls to the internet.
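As a rough sketch of the idea, the snippet below only assembles the `gcloud compute ssh` command with the `--tunnel-through-iap` flag; it does not run it. The instance name and zone are hypothetical placeholders, and this assumes the gcloud CLI is installed and the VM's firewall allows SSH from IAP's TCP forwarding range.

```python
import shlex
from typing import List, Optional


def iap_ssh_command(instance: str, zone: str, project: Optional[str] = None) -> List[str]:
    """Build a gcloud command that SSHes to a VM through an IAP tunnel."""
    cmd = ["gcloud", "compute", "ssh", instance, f"--zone={zone}", "--tunnel-through-iap"]
    if project:
        # Only add the project flag when one is given; otherwise gcloud's default applies.
        cmd.append(f"--project={project}")
    return cmd


# Hypothetical instance and zone names, for illustration only.
command = iap_ssh_command("my-vm", "europe-west1-b")
print(shlex.join(command))
```

Building the command as a list keeps it easy to hand to `subprocess.run` later without worrying about shell quoting.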

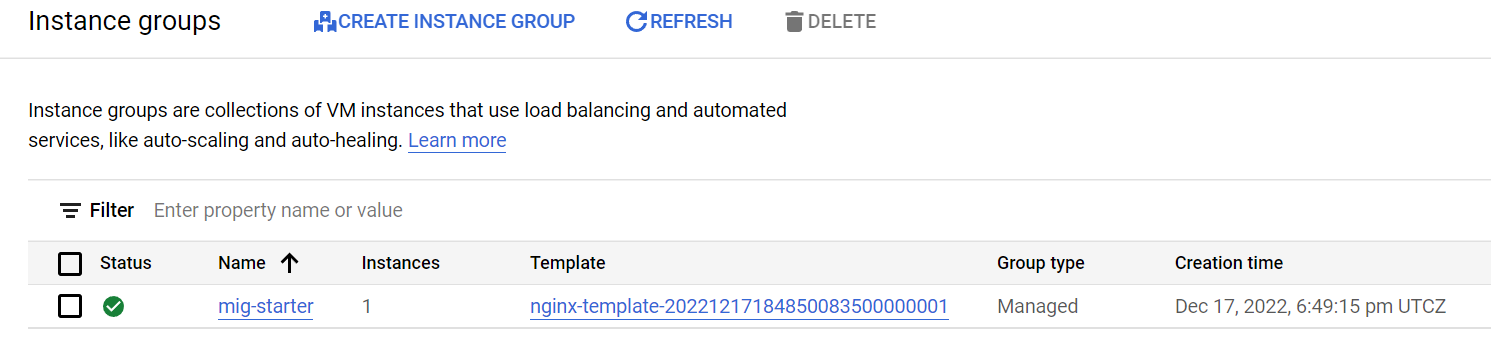

Hello, so I’m thinking about what exactly to deploy. I have a few good ideas from recent work tasks, but I would need to develop a few more bits of supporting infrastructure and services, so I am not sure in what order to proceed. Today I’ll start with deploying a managed instance group (MIG). If you know AWS you can think of this like an auto-scaling group. Basically it is a set of VMs that are managed as a group to provide highly available services.

With Terraform connecting to my GCP account I am able to start focusing on the infrastructure as code part of this project and less on the technical connectivity and setup which have been the focus of the previous posts.

Next thing I want to get working is Terraform Plan against my GCP project. I quickly swapped out of my blog volume to a Code folder where I will keep my .tf files.

Quick post - this page might become a page of tips.

Picking up from the end of the last post I needed to include my Git config inside my container so that I do not need to leave the container to use Git. I do this by mounting my .gitconfig file from my laptop into the container where it will be used while running in the container. This is how I get the right mix of disposability and persistence between my laptop and working container environment.
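A minimal sketch of that mount, assuming Docker is the container runtime and the container user's home is `/root` (the post does not say either way). The image name `my-dev-image` and the host path are hypothetical. The snippet only builds the `docker run` arguments, using a read-only bind mount so the container cannot alter the laptop's config.

```python
from pathlib import PurePosixPath
from typing import List


def docker_run_args(image: str, gitconfig: PurePosixPath, container_home: str = "/root") -> List[str]:
    """Build `docker run` arguments that bind-mount a host .gitconfig read-only."""
    # host_path:container_path:ro is Docker's short bind-mount syntax.
    mount = f"{gitconfig}:{container_home}/.gitconfig:ro"
    return ["docker", "run", "--rm", "-it", "-v", mount, image]


# Hypothetical host path and image name, for illustration only.
args = docker_run_args("my-dev-image", PurePosixPath("/home/me/.gitconfig"))
print(" ".join(args))
```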

Time to start a new project - frequently while at work after finishing a piece of work it strikes me that this would make a great blog post. While I can’t directly write about what I do at work, I realized I can spin up a similar project on my own time and not risk giving anything away. So here we are.

Finally I took the time to do a post on the completed monkey bar project. I am very happy with how it worked out. I learned a lot and got to use other skills that I don’t usually get to in my day job.

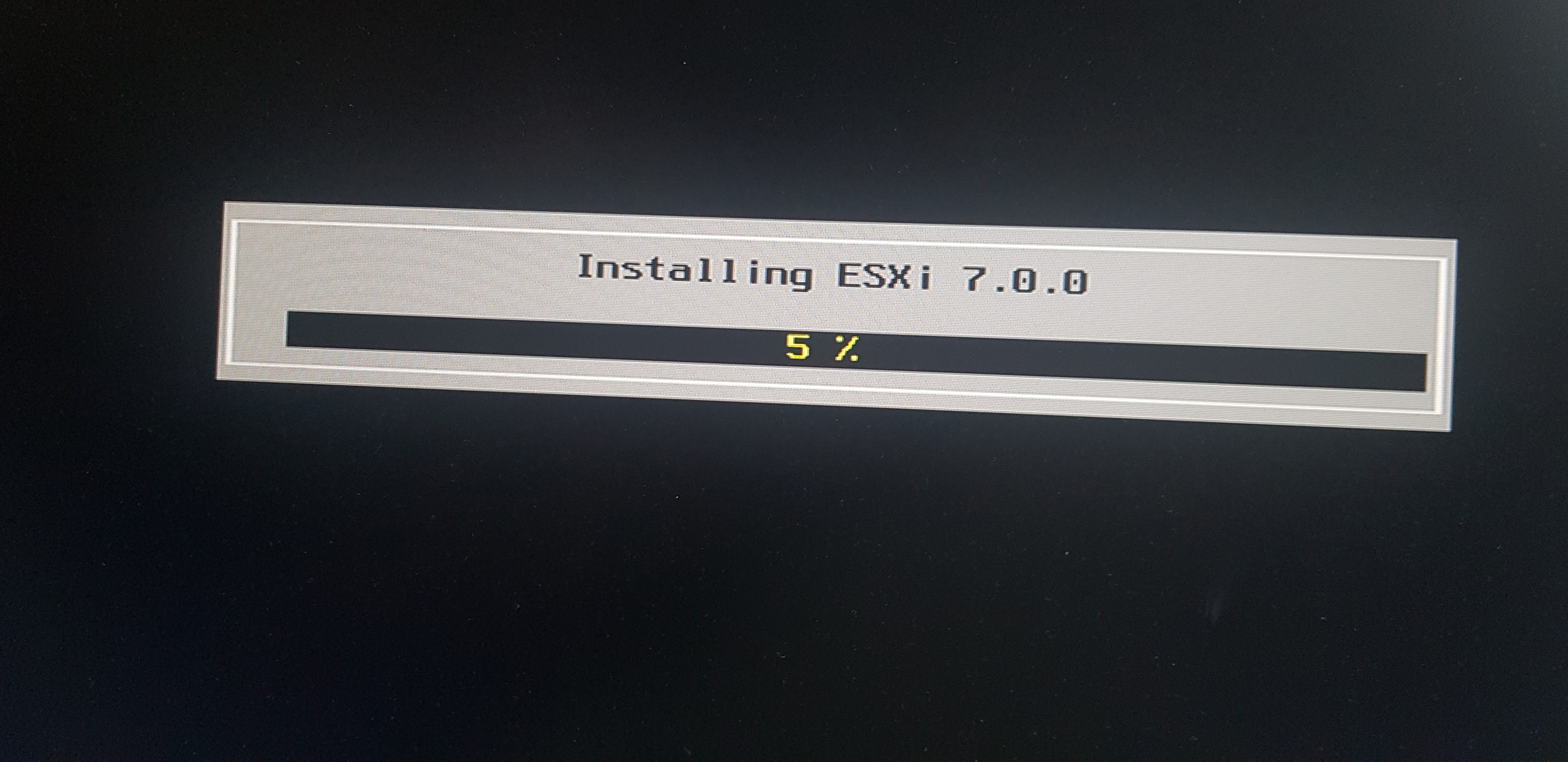

As I had hoped, it was actually quite easy to get the vCenter installed and I did in fact know what I was doing, mostly. I knew I’d need to get an ISO from VMware for the VCSA, so I downloaded that and created a VM before having created a datastore. This is something I’ve really appreciated about the build: all the parts work out of the box. My thanks to those who have gone before and figured all this out. Hat tip to VMware and Intel for making it happen on the product side, so take my money.

So yay, my NUC arrived. It is tiny and I’m really happy with how quiet and tidy it is. It’ll happily sit on a shelf out of the way. I’ve worked with ESXi for many years now but I rarely get this hands-on with hardware or do the initial standup of environments. So this is a project that will fill in some gaps for me. I really hope I know what I am doing or it’ll be embarrassing.

Hi, I am back and will start creating and publishing content for this blog again. I have been away mostly due to finishing my Master’s thesis, taking a little time off and changing jobs. In August I moved from Liberty IT to Workday and I am happy to say it was a great move. I had a great experience in Liberty IT and would probably not be where I am now if it was not for my 4 and a bit years there. The way they treat their staff and encourage growth was a real eye opener for me after coming from a semi-state organisation where things were, let us say, slower moving. I have settled in and learned a lot already with so much more ahead of me.

This is just quick post to talk about a great 3D sketch tool that I came across last week. I used to use SketchUp but that is not free anymore, I tried a couple of others, but they didn’t click for me. It needs to feel intuitive when you are starting from scratch.

As I mentioned in my initial post on this topic (eek, nearly two months ago), for college we were asked to create a Chaos Monkey-like script to test out an HA implementation. That part of the module was teaching us about good decoupled design using message queues and the different strategies available when designing how one system will talk to another. The aim was to demonstrate how, with a properly configured Auto Scaling group (ASG) and a decoupled communication approach, the system should be resilient to failures.

For college we were asked to create a Chaos Monkey-like script to test out an HA implementation. This was a great project to work through; I used the AWS Python SDK, Boto3. Quite a small learning curve, and I think I can cover the bones of it in one blog post once the assignment is handed in. A real-world addition to causing chaos was to time the recovery and send a message either via a Lambda or via an SNS topic. This took a little fiddling but again it was great to get hands-on with a “real” problem to solve.
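The shape of such a script might look something like the sketch below. It is not the assignment code: the function names are hypothetical, and the AWS calls are left as comments so the logic runs without credentials. The two pieces shown are picking a random victim and timing how long the group takes to get back to its desired healthy count.

```python
import random
import time
from typing import Callable, Sequence


def pick_victim(instance_ids: Sequence[str], rng: random.Random) -> str:
    """Choose one instance at random to terminate."""
    if not instance_ids:
        raise ValueError("no instances to choose from")
    return rng.choice(list(instance_ids))


def time_recovery(
    healthy_count: Callable[[], int],
    desired: int,
    poll_seconds: float = 5.0,
    timeout_seconds: float = 600.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> float:
    """Poll until healthy_count() reaches desired; return the elapsed seconds."""
    start = clock()
    while healthy_count() < desired:
        if clock() - start > timeout_seconds:
            raise TimeoutError("group did not recover in time")
        sleep(poll_seconds)
    return clock() - start


# With Boto3, the surrounding steps would be roughly (assumed wiring, not run here):
#   ec2.terminate_instances(InstanceIds=[victim])
#   elapsed = time_recovery(count_healthy_instances, desired=2)
#   sns.publish(TopicArn=topic_arn, Message=f"Recovered in {elapsed:.0f}s")
```

Passing the clock and sleep functions in as parameters is a design choice for testability: the timing logic can be exercised with fake values instead of waiting on a real Auto Scaling group.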

Complexity in a system cannot be destroyed. It only gets moved around and hidden.

Day 3, ok so I must confess I have been away from this project longer than I expected, but to be fair I have my current class loads as an excuse. Right now, I’m opting to do this rather than read another journal article on the state of the art in serverless. For those who care “Cloud Programming Simplified: A Berkeley View on Serverless Computing” was really good, hot off the presses released last month.

As I signed off yesterday I realised I still have content from last week that I want to capture here. Firstly, I’ve kicked off a new project. I know I keep doing that, but this one seems to be a really good way to practice my skills and give back to the community. As I hinted at back in January, I have started writing Pester tests for the VMware VMC Module because, well, there weren’t any. Since I would like to contribute code to that module (some of the things we’ve created in work), the best way to build trust would be to write tests for the existing code, and then when I add my code it will be demonstrably non-breaking to the existing code. This will hopefully get our team into the main VMware Code repo, which would be very cool.

Ugh, I can’t get into the “zone” at all today. I guess it is ok, I will be on again all week. I am starting to feel the pressure of college assignments weighing down on me again. Pretty sure I’ve one due in two weeks, plus the labs from last week. I’ve one lab done, but I want to get stuck into the Kubernetes one. So what else has been going on? I was up in Belfast for the first time this year for our Town Hall and that was too short a visit; it was awesome to get recognition for the AWS certification we did last year though. Everyone who achieved a cert was gifted an Amazon Echo Plus! That’s my second Echo to join my Google Home Mini, so ambient computing is spreading across the Tolan household. I enjoy that my kids are totally comfortable with asking Google for things like turning on the TV and Netflix. I also get to see the shortfall of voice communications: you can’t do auto-complete with voice, so you can’t start with “Gon-” and get both Gon, the Netflix cartoon for kids, and Gone, the drama for adults. No, Google Assistant makes a guess based on algorithmic probability (or however), and so Gon the dinosaur cartoon is impossible for my kids or me to call up via voice. Interesting observation, if nothing else.

Who am I? What am I? What do I do? Does it matter? Last autumn a colleague and I were asked to do some research around our job titles, it had become clear that our day to day work had changed significantly and we no longer considered ourselves classic system administrators but if this is true, what were we? Coupled with interesting job titles like DevOps Engineer, Site Reliability Engineer (SRE) and Infrastructure Developer emerging in the market, we were eager to see who we were. So, we set off to investigate the desirability and suitability of a title change.

WIP Post Stub

Woohoo, I have gotten my first ever open source contributor credit. This week I was referring to the way that project validated parameters in Pester when I thought about my code contribution back in September (wow, really September, if it wasn’t dated I wouldn’t believe it). Take a look at my original post on this for more detail.

Day 2, I decided that it will be most beneficial to more people to see how to get this into Azure DevOps rather than going into writing application code for an application that no one really cares about. So here we go. I have re-opened Visual Studio and opened my project. Why not “Launch” it again to make sure you have working code to start with.

I just did a count and I’ve done 50 posts already; this makes 51. I know I have barely scratched the surface of the topics I want to cover so it is great to think that I’m nowhere near done with blogging. I am sitting down to write Day 2 of the Azure pipeline project and once that is done I will sprinkle back in some AWS study. College has kicked off and it will be AWS focused, so what a great time to double down and get certified. Disappointingly I have not done nearly as much Smart Home work as I’d hoped. I’ve a post in me to complain about the cheap wifi bulbs I got from AliExpress, all of which burned out within 3-6 months, ugh. That said, I’m sitting in a room with two bulbs from other brands that are still working away. I should really contribute back my experience with smart bulbs, but it isn’t a priority and I need to focus on my priorities to get the most done.

Ok, so let’s start this project. Today we will get right into it: we’ll get set up with the starter app and our GitHub repo, and open it up in our IDE, Visual Studio. First get Visual Studio Community from Microsoft’s free resources page. Download and install it.

Tonight, I started back in College for semester 3 of 4. A much shorter break this time around than between semester 1 and 2. The modules look very interesting and I totally called that since last semester it was Azure that this semester would be AWS (I mean they are the main players). We have Enterprise Architecture Design and Enterprise Architecture Deployment, both AWS focused and both very promising.

I am going to do some thinking out loud as I wait for my delayed flight to board. I have been thinking about the year ahead and what I want to accomplish, but I have too many ideas that all seem valid objectives. I came across The Law of the Vital Few in Deep Work by Cal Newport, this is also known as the 80-20 rule or The Pareto Principle. I want to be clever in what I commit to knowing that the majority of the benefit should come from just one or two of the most important projects. If I over commit to things I am taking effort away from another project - how much project time I have is a zero sum game. So the more I commit to the less I’ll accomplish, this is difficult to accept.

I wanted to point to an open source project that is very well hidden: VMware.VMC is the open source VMC PowerShell module. You can easily find the module at the PowerShell Gallery but I could not find it on GitHub. I was just about to email Alan Renouf to ask him about creating a project when I took one more look to be sure I wasn’t asking a stupid question. Turns out that it is open source but it is hidden in a PowerShell example script project. Really not where I expected it.

For the past week plus I’ve been developing a custom module for Ansible. Wait, I should back up a bit. We are looking at using VMware VMC on AWS, an amazingly promising service where VMware partnered with AWS to manage the underlying hardware for a managed vSphere environment as a service. They manage the hosts, they stand up the vCenter and you use it as a service - pay for what you need as long as you need it.

Here it is, the start of the Azure Pipeline project series that I’m going to publish for others to follow along. This was a college assignment which I particularly enjoyed and found useful in the new age of DevOps. I found it so good I felt compelled to share it. It is hosted on Azure and can be done entirely on the free tier. For my college project I used some paid features but I’ve rolled those back now and it is still as good as ever. This series will walk through all the steps to get your own CI/CD pipeline up and running on Azure for free. This is a great opportunity to get hands-on and learn the fiddly bits yourself; in many large organizations these details are kept behind the curtain and you miss out on knowing how they actually work (it is easier than you probably expected). So let’s jump in with an opening post setting out the App and the Ask. I will follow up with the first steps post shortly.

Ah yes, January, when everyone, including myself, rushes back to the gym to start off what will undoubtedly trail off sooner or later. Still, I don’t think it is pointless to try. In most cases it is an experiment to see if you can hack it or not. Last Jan I had been listening to so much information about the benefits of fasting that I took the opportunity to give a prolonged fast a whirl, and a year later I’m delighted I did. I repeated the experience this year with another 4 day fast (102 hours all in).

My first task of 2019 was to submit a paper whose deadline we had extended till today, so I submitted that last night. Next up, now that I’m free and clear of course work, is to revert my blood pressure app back to the free tier. I got a bill of just under 60 euro for my Azure usage towards the end of last year.

All done now, well mostly. Friday we presented our final assessment in the last day of the semester. I had an additional presentation to complete because I missed the last on campus day with being in Japan. They all went well and now I can focus on getting some of what I learned down on this blog. I’ve a backlog of ideas I need to work through. Great problem to have. As I said I’m mostly finished I still need to complete a state of the art type research paper and right now I’ve no idea what topic to look into. Hanging in the back of my mind is that I need to start working towards my thesis project/research but I’ve no strong idea for that yet either…

“Turning on the Christmas”, I love it. I still enjoy spotting the gap in the Google Assistant AI not really knowing what’s going on even if it does exactly as it was asked. The battery for my IR wall socket remote control died; luckily I have a few SONOFF wifi switches lying around, so I decided to upgrade my remote control of the Christmas lights! See a demo here on YouTube.

Most of the Ansible stuff I see out there online is for deploying developer VM instances into existing VMware infrastructure, but we need to use it to manage the VMware infrastructure itself. For example, the Dynamic Inventory script only returns the virtual machines, not the hosts. Does this push me into maintaining hostnames manually? We have over 1000 ESXi hosts and we need configuration management to make our lives better. Adding and removing hosts is not as smooth as it would be if we had better automation. It is my goal to get us further along this road; first things first, we need to capture the existing configurations as code.

Configuration management is going to be a major focus for us next year. We are currently weighing up Ansible versus our own developed scripts in PowerShell. PowerShell was my first choice, but Ansible seems to be worth investigating. There are a fair number of modules for VMware/vSphere out there already and we need to see if they meet our needs. The other option is to use PowerCLI and to target the settings we care about. Since Ansible was designed to get and set configuration items, it will be interesting to compare whether the learning curve is justified by the pre-existing art or complicated by forcing us into the mold.

In this post I will show you how I used LetsEncrypt to create an SSL cert which I was able to then apply to my Azure Web App. This meant I was able to use my custom domain name with https without the security warning caused by the wildcard Azure cert not matching my domain.

I got there in the end, I’m calling it done for my pipeline. It meets all the requirements with a couple of extras that I hope add enough for a great grade. I put so much time into this because I really wanted to crack it; in a lot of ways this is my new niche. Coming from a Systems Admin/Engineer background, handling the deployment of code to the runtime is my wheelhouse. The pipeline also closed a knowledge gap that I had: I must admit I didn’t know exactly how the deployed app emerged from lines of code to a built app that was then deployed.

We had an interesting conversation today in the office about teaching computers in school. We have a CSR program where we go and show kids what it is like to program computers and it got us talking about where we learned about computers in the first place. For me it was not at school; I learned about and fell in love with computers at home. My parents bought one of the old office computers after an upgrade. So I had a 386 with a 40MB hard drive and, sheesh, I don’t know how much RAM, I guess only MBs of RAM if the HDD was only 40MB. The hard drive size I clearly remember because I constantly had to free space for newer games from the floppy discs of computer magazines. Ah, memories.

I have been quite impressed by SonarCloud. I did not have previous experience with SonarQube so this is all new to me. As I’ve mentioned in a few posts, we were asked to implement quality assurance into our pipelines with NDepend or SonarCloud. I went with what I guess is QAaaS? SonarCloud as a hosted service allows me to check the quality of each build, and I added tasks to the pipeline to break the build if the project does not pass.

After several days bashing my head against my Azure DevOps pipeline, I finally got the Selenium tests working. Hindsight being what it is, it seems easy to understand now but I struggled. I knew that Selenium tests needed to be run against a deployed WebApp so I was putting them into a stage in my Release pipeline, but kept running into “no test assemblies found” errors. Actually, as I write this I realise I should get the error message correct in the hope that I can help someone with the same problem.

Back in work today and gradually getting over the post holiday shock. I have to say it really hit home when I sat on the freezing cold toilet seat at 4am. What is this the stone ages, why are they not heated like in Japan? I might just have to start a side gig importing them to set right what is wrong with this situation.

The reason I have not been posting is twofold: one, I’m in Japan on holiday, and two, I’ve had a college assignment due that ate up all spare laptop time. Right off let me say Japan is amazing; anyone thinking of going should. Do not let fear of the language barrier hold you back. We’ve zero Japanese and we’ve managed with no problems at all. All the important signs have English and Google Maps takes care of getting around. It knows where you’re going and it knows where you are – problem sorted.

I got an email last week seeking a testimonial on the impact the DevOps MSc has had on me. It was an opportunity to reflect so I took it. It is an interesting question of the chicken or the egg. Was it learning the material for the MSc that spurred me to the fore at work, or was it my need to be at the fore in work that made my desire to understand DevOps so visceral?

Woop! I finally got tags working for my blog. The GitHub Pages flavour of Jekyll is a special one, so not all you read on the internet works out. I think I was following and backing out of my fourth or fifth blog post before I struck on one I was sure should work. Long Qian has a good walkthrough of how to set everything up. My setup didn’t exactly match his, but his obviously working site running right out of his public repo kind of smacked me in the face. It is as I say: if you don’t know something it is your fault, because all the information is out there.

For this semester’s college project we are tasked with implementing continuous software delivery through a pipeline performing unit tests, integration tests, user acceptance tests and performance tests. It is a great project, I’m actually excited to get to do it. We have been given a mostly completed C# application that calculates your blood pressure and we have to complete the program, add a feature of our choosing and create the continuous delivery pipeline with all the bells and whistles we can muster.

I had some idea, but really no idea, just how complex Search Engine Optimisation (SEO) had gotten. I have added Google Analytics to this site for insight and experience. I’m only starting out so as expected I’ve next to no readers, but with my PowerShell Lambda posts coming within a month of their publication I thought maybe I’d be higher in the organic ranking for the specific keywords AWS Lambda PowerShell. Boy was I wrong.

I executed the function from the main page once created and it works!

Friday night I slept out with Focus Ireland and their Shine a Light campaign. It was quite the eye opener and something I will never forget. I am delighted to have exceeded my goal of 500 euro with a final count of 660, I am over the moon.

A month ago now AWS announced Lambda support for PowerShell Core. This is awesome and something I am certainly going to learn to use. PowerShell is my go-to language in work, but when I move to web platforms PowerShell seems so far away. Hopefully these Lambdas will let me write in my native tongue while away from home.

There is a lot I want to write about Functions as a Service (FaaS), and I don’t know whether to start with a demo or an explanation. Since I don’t have a demo ready right now I guess I’ll go with an explanation of why I’m so excited by the idea.

Back at my AWS Certified Solutions Architect - Professional prep, reviewing the Whizlabs practice exams.

I know I am a geek when I google this sort of stuff and find it interesting and educational. I use the term Pipe all the time for the | character. It is a fundamental character in PowerShell; you can’t get far without it. I learned it from Don Jones and Jeffrey Snover, watching the Microsoft Virtual Academy videos back when I was first getting certified. They used it, they even explained it in the entry level courses, and I guess it is clear that it is called a Pipe because it looks like one?

On October 12th I will be taking part in Focus Ireland’s Shine a Light event. It will be quite the experience and the long range BBC weather forecast looks like rain!

I recently stumbled across Mark Schwartz again as Jude is reading two of his books that I highly recommend for anyone working in IT and Agile, The Art of Business Value and A Seat at the Table. His writing challenges whether we know what we really mean when we say that Agile delivers more business value than waterfall. Sure people say it all the time, but what does it mean, and how do we know?

Tonight’s lecture was all about communication, the different methods and approaches that lead to better outcomes and better communication of the message. It is something we do all the time, but how often do we consider if we are doing it well?

Short one today. I spent a bit of time trying to solve a minor problem with my Markdown and I learned a bit along the way. I am still getting to know Markdown, including the fact that there are many flavours of it, not all supported everywhere.

Picking up right where I left off yesterday. Crap, again I’ve not done any testing, but I have come too far now not to keep going. I took a look at their guide for writing tests. Come on, seriously?

Yesterday was a big day for me. Inspired by the talk from Maggie Pint at Microsoft’s Leopardstown campus on Monday, I took another look at contributing to an open source project. I know I am fluent in PowerShell, so surely there is something out there that I can help with. The talk by Maggie informed me of a practice among the open source community of tagging entry level issues as “up-for-grabs”, so I searched GitHub for “up-for-grabs”+“PowerShell” and obviously I got hundreds of hits. I eventually came across a project that I recognised, JiraPS. We use the Atlassian stack in work and I’m familiar with the Jira APIs because I’ve written functions for personal use to speed up our Sprint Planning and Refinement ceremonies with hardcoded team values etc.

I’m going back back to Azure Azure! We had the first class in the Continuous Software Delivery module last night and it was both thrilling and terrifying. I’m going to have to write some C# code, but it is not the focus of the course, just a part of it.

Last night I got back into my AWS Certified Solutions Architect - Professional prep with the Whizlabs practice exams.

Tuesday I was back in college for semester two of my Masters in DevOps. The two modules are Continuous Software Delivery and Human Org Skills. These will run between now and December and we’re off at pace; the first assignment was handed out already.

Well, yesterday was fun. I got to go to the Software As Craft event in the new Microsoft building in Leopardstown. The main draw for me was to hear the author of Accelerate speak. I have listened to the audio version of that book more than once and highly recommend it for anyone still arguing with colleagues about what measures are most effective or, worse, whether you even need to be Agile and do DevOps.

That took too long! I have spent quite a bit of time today trying to fix my broken Home Assistant setup. It was not totally broken, but there was a bug in version 0.75 preventing me from accessing the Hassio page where I could then update to a non-buggy version. I found the issue page with a quick Google but, as I mentioned in my last post, I don’t have a screen or keyboard attached to my Raspberry Pi. It sits in a cupboard.

I’m writing this update on my phone at the airport, the beauty of GitHub is that it is available everywhere. To post or edit now I don’t need to be at my computer.

I have been playing with themes for this blog and there are a few cool ones I’d like to try out. One project by Phlow, feeling-responsive, looks great; you can see the demo site.

Now that this blog is up and running for a whole week, I am starting to think about what to do next.

Not yet working - Updated. The HTTPS and DNS forwarding are not working yet, so I’m going to do what we always do in situations like this: tear it all down, go back and do it again. This time with knowledge I didn’t have the first time.

Yesterday I bought my domain name conortolan.com and I played around with domain forwarding quite successfully. Sadly, I have not been so successful with setting the domain as my custom domain on GitHub. While I’ve gotten the basics working, I am struggling a bit with HTTPS and mixed content. I am hoping that I just need to be patient and let the process happen, with GitHub and LetsEncrypt sorting it all out in the background as per the message below.

Today I took the advice of Gary Vaynerchuk and bought my domain name. This is a quick write-up on how it went applying it to my GitHub Pages blog. I started out on GoDaddy and bought the domain name via PayPal. The two year order seemed reasonably priced at about $10 a year (side note: why does conordean.com cost $899? Because he’s an Irish rugby player?). With the domain mine, the first thing I did was set up forwarding to try it out.

Today I found the perfect place for two Mi Light coloured bulbs.

So what happens in your house when you say “Hey Google, put up the blinds!”

This is the first post in my blog, so let me introduce myself. My name is Conor and I got my first computer when I was 11 or 12 and have never been far from one since.

MSc DevOps Azure Thinking-Out-Loud Jeykll-blog GCP Pipelines HomeAutomation BPApp VMware PowerShell Opensource Certified-Solutions-Architect Ansible AWS prisma nextjs cloud-run Learning Lambda HomeLab Hey-Google CSR C AWS-CSA thinking-out-loud tech-stack recharts pydanticai postgresql next-auth monorepo lessons-learned langgraph incident-report growth-charts gcp fatherhood docker deployment csv-import algorithms admin-dashboard Windows Thinking-out-loud Serverless SRE SEO RaspberryPi Rant Out-loud OpenFaaS MonkeyBars Mark-Schwartz MSC Japan Hassio GitHub-Pages Focus-Ireland Deliberate Charity Certbot